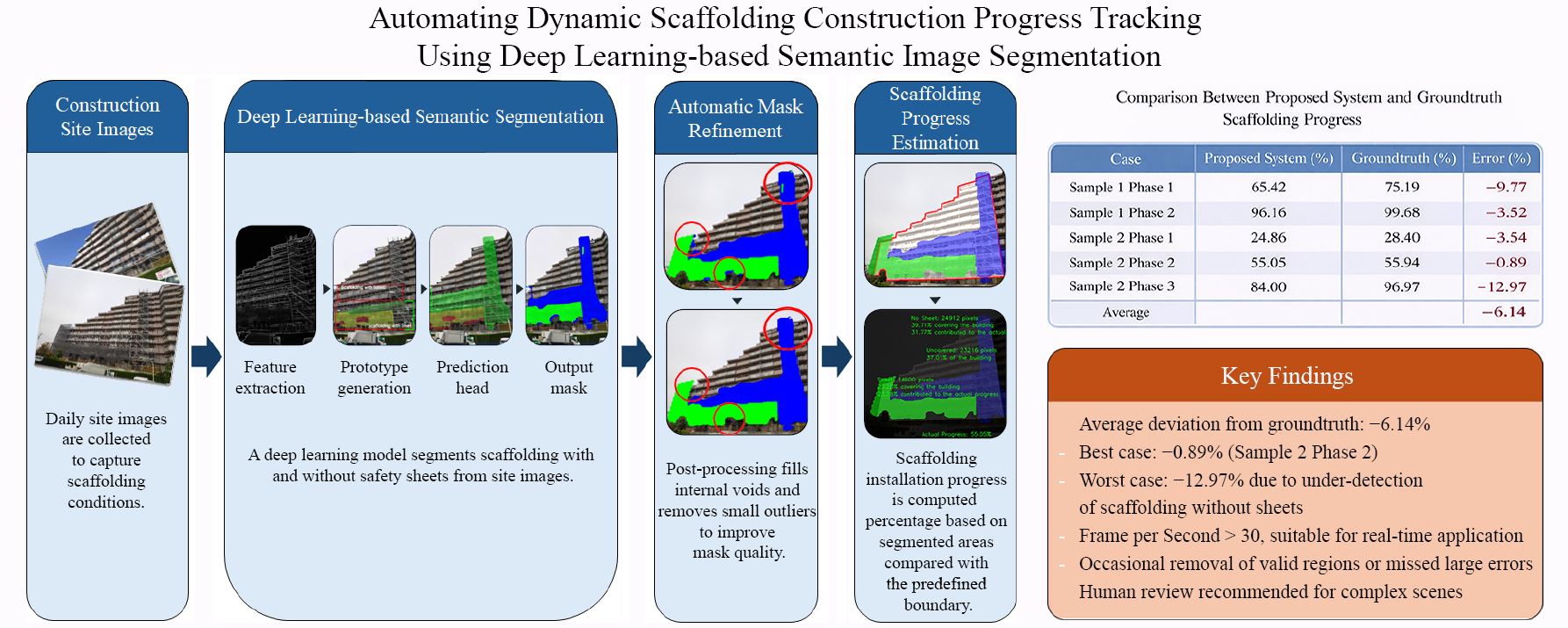

Automating Dynamic Scaffolding Construction Progress Tracking Using Deep Learning-based Semantic Image Segmentation


Abstract

Scaffolding plays a critical role in construction by providing safe access to elevated work areas, yet it is often overlooked in progress monitoring and omitted from reports and building information models (BIM) because it is temporary and changes frequently. This study presents a deep learning-based semantic segmentation system for tracking scaffolding installation progress that distinguishes between scaffolding with and without safety sheets. A custom dataset of annotated site images was used to train the model, and performance was evaluated on both validation and test sets. The system achieved real-time processing speeds of 33.81 frames per second (FPS) on the validation set and 31.28 FPS on the test set, with mean Average Precision (mAP) scores of 0.496 and 0.480, respectively. Class-specific results showed consistently higher accuracy for scaffolding with safety sheets, with peak Intersection over Union (IoU) values exceeding 93% at one case-study time point. Two construction case studies, each covering multiple time points, demonstrated the system's robustness across varying site conditions. An automatic mask modification algorithm was applied to address missed detections, improving IoU by up to 3.10% in challenging scenarios. The calculated progress, based on segmented masks and predefined building boundaries, was compared with ground-truth measurements, confirming the system's capability for quantitative progress tracking. Results indicate that scaffolding with safety sheets is detected more reliably, while scaffolding without sheets remains more challenging to detect. The proposed method offers a practical tool for reducing inspection workload, improving safety compliance, and enabling more comprehensive progress tracking in construction projects, particularly where scaffolding installation is a major operational activity.
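The two quantities the abstract relies on, IoU between a predicted and a ground-truth mask and progress derived from a segmented mask and a predefined building boundary, can be illustrated with a minimal Python sketch. This is not the authors' implementation: the function names `iou` and `progress`, the toy masks, and in particular the definition of progress as the share of boundary pixels covered by scaffolding are assumptions made here for illustration, since the abstract does not give the exact formula.

```python
from typing import List

# Binary mask: 1 = pixel belongs to the class, 0 = background.
Mask = List[List[int]]


def iou(pred: Mask, truth: Mask) -> float:
    """Intersection over Union of two same-sized binary masks."""
    inter = union = 0
    for pred_row, truth_row in zip(pred, truth):
        for p, t in zip(pred_row, truth_row):
            inter += 1 if (p and t) else 0
            union += 1 if (p or t) else 0
    # Assumed convention: two empty masks count as a perfect match.
    return inter / union if union else 1.0


def progress(scaffold: Mask, boundary: Mask) -> float:
    """Share of the building-boundary region covered by scaffolding pixels.

    Assumed definition, not taken from the paper.
    """
    covered = total = 0
    for s_row, b_row in zip(scaffold, boundary):
        for s, b in zip(s_row, b_row):
            if b:
                total += 1
                covered += 1 if s else 0
    return covered / total if total else 0.0


# Toy 3x4 masks (hypothetical data).
pred = [[1, 1, 0, 0],
        [1, 1, 0, 0],
        [0, 0, 0, 0]]
truth = [[1, 1, 1, 0],
         [1, 1, 0, 0],
         [0, 0, 0, 0]]
boundary = [[1, 1, 1, 1],
            [1, 1, 1, 1],
            [0, 0, 0, 0]]

print(f"IoU: {iou(pred, truth):.2f}")
print(f"Progress: {progress(pred, boundary):.0%}")
```

In this toy case the masks share 4 pixels out of a union of 5, and the predicted scaffolding covers 4 of the 8 boundary pixels. A per-class evaluation would repeat the IoU computation separately for the "with safety sheets" and "without safety sheets" masks.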

Article Details

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.
