Hybrid WangchanBERTa Architectures for Multi-Class Thai Sentiment Analysis

Abstract

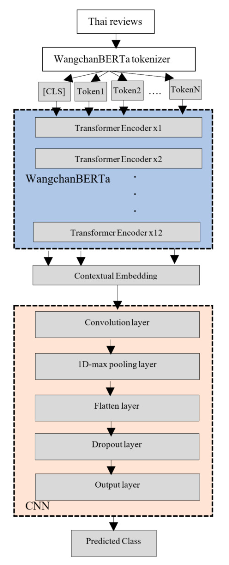

The rapid growth of the restaurant industry in Thailand has increased the importance of online reviews, which shape customer perceptions and influence business performance. Sentiment analysis is an effective computational approach for extracting customer opinions from such reviews; however, multi-class sentiment classification in Thai remains challenging because the language is written without explicit word boundaries and because review datasets suffer from class imbalance. This study investigates three hybrid deep learning models (WangchanBERTa-MLP, WangchanBERTa-CNN, and WangchanBERTa-BiLSTM) that combine WangchanBERTa, a Thai-specific pre-trained language model, with different neural architectures. Using a dataset of restaurant reviews balanced with SMOTE, the models were evaluated on accuracy, precision, recall, and F1-score. The experimental results show that WangchanBERTa-BiLSTM performed best overall, achieving an accuracy of 85.22% and significantly improving the classification of neutral and positive sentiments compared to the BERT-based models and the other hybrid methods.
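As a rough illustration of the class-balancing step mentioned above, the following is a minimal sketch of SMOTE-style oversampling in plain Python. It is not the authors' implementation: the abstract does not say which feature space SMOTE was applied to, so the sketch assumes each review is already a fixed-length numeric vector. The function name `smote_oversample`, its parameters, and the toy `neutral` data are hypothetical.

```python
import random
from typing import List, Sequence


def squared_distance(a: Sequence[float], b: Sequence[float]) -> float:
    """Squared Euclidean distance between two equal-length vectors."""
    return sum((x - y) ** 2 for x, y in zip(a, b))


def smote_oversample(
    minority: List[List[float]], n_new: int, k: int = 5, seed: int = 0
) -> List[List[float]]:
    """Return n_new synthetic vectors for a minority class (hypothetical helper).

    Each synthetic vector lies on the line segment between a randomly chosen
    minority sample and one of its k nearest minority-class neighbours.
    """
    if len(minority) < 2:
        raise ValueError("need at least two minority samples to interpolate")
    rng = random.Random(seed)
    k = min(k, len(minority) - 1)  # cannot have more neighbours than other samples
    synthetic = []
    for _ in range(n_new):
        i = rng.randrange(len(minority))
        base = minority[i]
        # Indices of the k nearest minority samples, excluding the base itself.
        neighbours = sorted(
            (j for j in range(len(minority)) if j != i),
            key=lambda j: squared_distance(base, minority[j]),
        )[:k]
        neighbour = minority[rng.choice(neighbours)]
        lam = rng.random()  # interpolation weight in [0, 1)
        synthetic.append([b + lam * (n - b) for b, n in zip(base, neighbour)])
    return synthetic


# Toy 2-D vectors standing in for review features (assumed, not from the study).
neutral = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
extra = smote_oversample(neutral, n_new=6, k=2)
balanced_neutral = neutral + extra
print(len(balanced_neutral))  # 10
```

Because every synthetic point is an interpolation between two real minority samples, the added points stay inside the region the minority class already occupies. In practice, oversampling of this kind is normally applied to the training split only, so that evaluation data contains no synthetic samples.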

Article Details

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.
