Pedestrian Attribute Recognition Model for UAV Application: A Practical Approach


Abstract

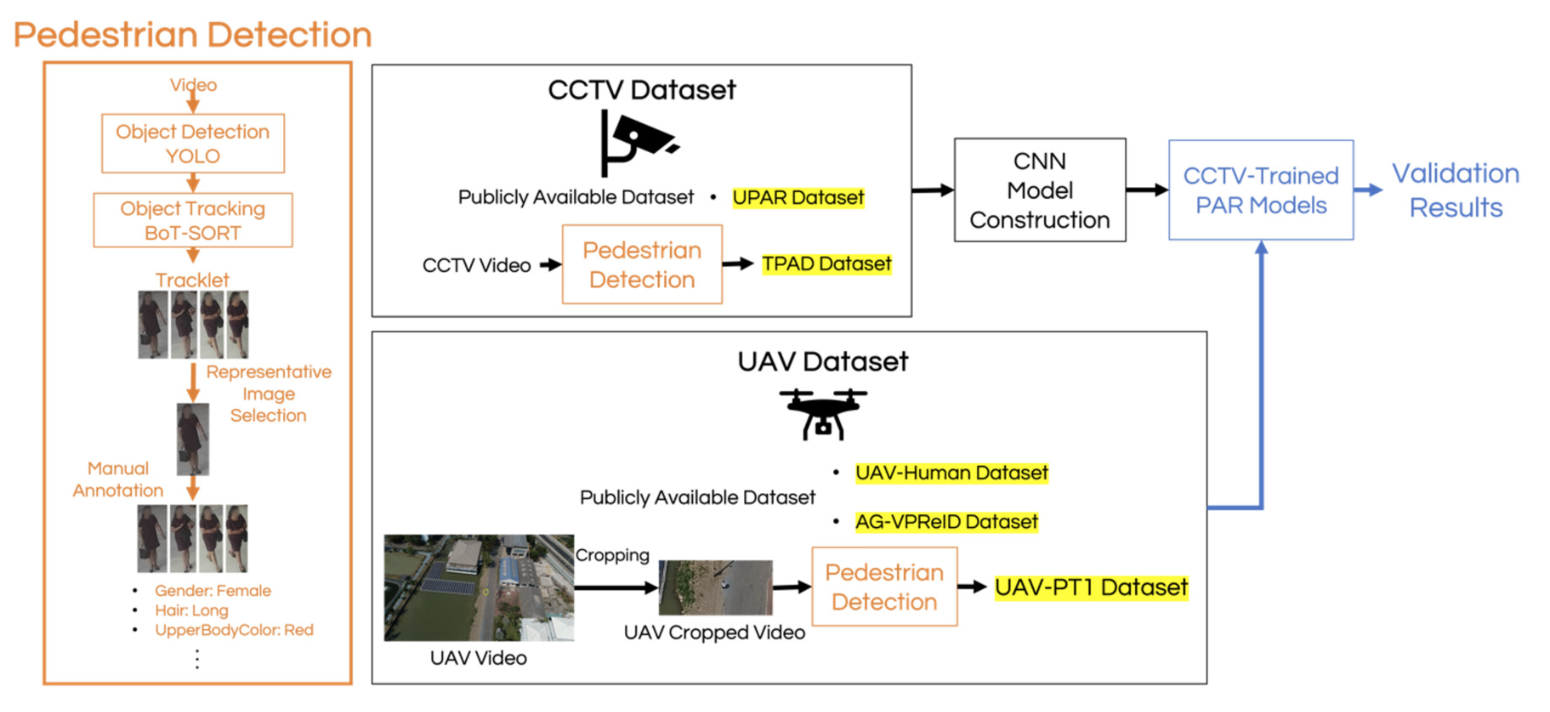

Pedestrian Attribute Recognition (PAR) is an important component of intelligent surveillance systems, yet ground-to-aerial cross-domain PAR and the effect of UAV flight conditions on it remain largely unexplored. This work investigates whether PAR models trained on ground-level CCTV datasets can be applied to UAV imagery, and it quantitatively analyzes how UAV elevation angle and horizontal distance affect attribute recognition performance. Five CNN models are trained on two CCTV datasets with different characteristics, the large and diverse UPAR dataset and the homogeneous TPAD dataset, using a multi-label classification framework with positive class weighting to handle class imbalance. Cross-dataset evaluation on CCTV data leads to the selection of RegNet and ConvNeXt. For ground-to-aerial evaluation, the selected models are tested on three UAV datasets: UAV-Human, AG-VPReID, and the self-collected UAV-PT1 dataset. UPAR-trained models achieve a mean attribute accuracy of 62.23-65.48%, while TPAD-trained models perform worse. RegNet achieves performance comparable to ConvNeXt with significantly lower computational complexity, making it more suitable for UAV deployment. Attribute-level analysis shows that UpperBodyLength, LowerBodyLength, LowerBodyColor, and Backpack are recognized more reliably. Further analysis on UAV-PT1 shows that increasing the horizontal distance from 25 m to 50 m reduces accuracy by 11.37-12.11%, and that a high elevation angle of 50° causes a significant performance drop. Together, these results provide an evaluation of ground-to-aerial PAR and of the impact of UAV flight parameters on attribute recognition.
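The abstract names two technical ingredients without spelling them out: a multi-label loss with positive class weighting, and a mean attribute accuracy metric. The sketch below illustrates one common way to realize both in NumPy. It is an assumption, not the authors' code: the per-attribute weight is taken as the ratio of negative to positive training samples, predictions are thresholded at a probability of 0.5, and "mean attribute accuracy" is read as plain per-attribute accuracy averaged over attributes (the PAR literature also uses a label-based "mA" that averages positive and negative recall, which may be what the paper reports). The function names are hypothetical.

```python
import numpy as np

def positive_class_weights(labels, eps=1e-6):
    """Hypothetical helper: per-attribute pos_weight = #negatives / #positives.

    labels: (N, A) array of 0/1 ground-truth attributes from the training set.
    The neg/pos ratio is an assumed weighting heuristic, not taken from the paper.
    """
    labels = np.asarray(labels, dtype=float)
    pos = labels.sum(axis=0)
    neg = labels.shape[0] - pos
    # eps avoids division by zero for attributes with no positive samples
    return neg / np.maximum(pos, eps)

def weighted_bce_with_logits(logits, labels, pos_weight):
    """Mean binary cross-entropy over all samples and attributes.

    The positive-label term of attribute j is scaled by pos_weight[j], so rare
    positive attributes contribute more to the loss.
    """
    x = np.asarray(logits, dtype=float)
    y = np.asarray(labels, dtype=float)
    # Numerically stable log(sigmoid(x)) and log(1 - sigmoid(x))
    log_p = -np.logaddexp(0.0, -x)
    log_not_p = -np.logaddexp(0.0, x)
    loss = -(pos_weight * y * log_p + (1.0 - y) * log_not_p)
    return loss.mean()

def mean_attribute_accuracy(logits, labels, threshold=0.5):
    """Accuracy of each attribute over all samples, averaged over attributes.

    Returns (mean accuracy, per-attribute accuracy array); the 0.5 threshold is assumed.
    """
    probs = 1.0 / (1.0 + np.exp(-np.asarray(logits, dtype=float)))
    preds = (probs >= threshold).astype(int)
    per_attr = (preds == np.asarray(labels)).mean(axis=0)
    return per_attr.mean(), per_attr

if __name__ == "__main__":
    # Toy example: 4 samples, 2 attributes (e.g. Backpack, Hat)
    y = np.array([[1, 0], [1, 0], [0, 1], [0, 0]])
    w = positive_class_weights(y)  # attribute 2 is rarer, so it gets a larger weight
    logits = np.array([[2.0, -1.0], [-0.5, -3.0], [-1.0, 1.0], [-2.0, 0.5]])
    print("pos_weight:", w)
    print("loss:", weighted_bce_with_logits(logits, y, w))
    print("mean attribute accuracy:", mean_attribute_accuracy(logits, y)[0])
```

In a PyTorch training loop the same weighting is available directly through `torch.nn.BCEWithLogitsLoss(pos_weight=...)`, with the weight vector computed once from the training labels; the NumPy version is shown only to make the arithmetic explicit.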

Article Details

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.

References

A. K. Sachdev, “Artificial intelligence and military aviation,” in Artificial Intelligence, Ethics and the Future of Warfare, Routledge India, pp. 91–107, 2024.

X. Wang et al., “Pedestrian attribute recognition: A survey,” Pattern Recognition, vol. 121, p. 108220, 2022.

A. G. Perera, A. Al-Naji, Y. W. Law and J. Chahl, “Human detection and motion analysis from a quadrotor UAV,” in IOP Conference Series: Materials Science and Engineering, vol. 405, no. 1, p. 012003, 2018.

M. Cormier et al., “UPAR Challenge 2024: Pedestrian Attribute Recognition and Attribute-Based Person Retrieval Dataset, Design, and Results,” 2024 IEEE/CVF Winter Conference on Applications of Computer Vision Workshops (WACVW), Waikoloa, HI, USA, pp. 359-367, 2024.

K. Parattanawong, S. Erjongmanee, E. Suwanagood and C. Klumpol, “Benchmarking pedestrian attribute recognition systems for UAVs using locally collected dataset: A case study in Thailand,” in 28th International Computer Science and Engineering Conference, pp. 1–6, 2024.

T. Li, J. Liu, W. Zhang, Y. Ni, W. Wang and Z. Li, “UAV-Human: A Large Benchmark for Human Behavior Understanding with Unmanned Aerial Vehicles,” 2021 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, pp. 16261-16270, 2021.

H. Nguyen, K. Nguyen, A. Pemasiri, F. Liu, S. Sridharan and C. Fookes, “AG-VPReID: A Challenging Large-Scale Benchmark for Aerial-Ground Video-based Person Re-Identification,” 2025 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Nashville, TN, USA, pp. 1241-1251, 2025.

Y. Deng, P. Luo, C. C. Loy and X. Tang, “Pedestrian attribute recognition at far distance,” in Proceedings of the 22nd ACM International Conference on Multimedia, pp. 789–792, 2014.

X. Liu et al., “HydraPlus-Net: Attentive Deep Features for Pedestrian Analysis,” 2017 IEEE International Conference on Computer Vision (ICCV), Venice, Italy, pp. 350-359, 2017.

D. Li, Z. Zhang, X. Chen and K. Huang, “A Richly Annotated Pedestrian Dataset for Person Retrieval in Real Surveillance Scenarios,” in IEEE Transactions on Image Processing, vol. 28, no. 4, pp. 1575-1590, April 2019.

L. Zheng, L. Shen, L. Tian, S. Wang, J. Wang and Q. Tian, “Scalable Person Re-identification: A Benchmark,” 2015 IEEE International Conference on Computer Vision (ICCV), Santiago, Chile, pp. 1116-1124, 2015.

D. Weng, Z. Tan, L. Fang and G. Guo, “Exploring attribute localization and correlation for pedestrian attribute recognition,” Neurocomputing, vol. 531, pp. 140–150, 2023.

A. Specker, M. Cormier and J. Beyerer, “UPAR: Unified Pedestrian Attribute Recognition and Person Retrieval,” 2023 IEEE/CVF Winter Conference on Applications of Computer Vision (WACV), Waikoloa, HI, USA, pp. 981-990, 2023.

M. S. Sarfraz, A. Schumann, Y. Wang and R. Stiefelhagen, “Deep view-sensitive pedestrian attribute inference in an end-to-end model,” arXiv preprint arXiv:1707.06089, 2017.

V. Pandey, K. Anand, A. Kalra, A. Gupta, P. P. Roy and B.-G. Kim, “Enhancing object detection in aerial images,” Mathematical Biosciences and Engineering, vol. 19, no. 8, pp. 7920–7932, 2022.

G. Tang, J. Ni, Y. Zhao, Y. Gu and W. Cao, “A survey of object detection for UAVs based on deep learning,” Mathematical Biosciences and Engineering, vol. 16, no. 1, p. 149, 2023.

J. Leng et al., “Recent advances for aerial object detection: A survey,” ACM Computing Surveys, vol. 56, no. 12, pp. 1–36, 2024.

L. Wei, S. Zhang, W. Gao and Q. Tian, “Person Transfer GAN to Bridge Domain Gap for Person Re-identification,” 2018 IEEE/CVF Conference on Computer Vision and Pattern Recognition, Salt Lake City, UT, USA, pp. 79-88, 2018.

A. Grigorev, Z. Tian, S. Rho, J. Xiong, S. Liu and F. Jiang, “Deep person re-identification in UAV images,” EURASIP Journal on Advances in Signal Processing, vol. 2019, no. 54, 2019.

L. Xu, H. Peng, L. Wang and D. Xia, “Meta-transfer learning for person re-identification in aerial imagery,” Computer Supported Cooperative Work and Social Computing, pp. 634–644, 2022.

J. Jin, X. Wang, Q. Zhu, H. Wang and C. Li, “Pedestrian attribute recognition: A new benchmark dataset and a large language model augmented framework,” in Proceedings of the AAAI Conference on Artificial Intelligence, vol. 39, pp. 4138–4146, 2025.

Y. Zhang et al., “ByteTrack: Multi-object tracking by associating every detection box,” Computer Vision – ECCV 2022, pp. 1–21, 2022.

N. Aharon, R. Orfaig, and B.-Z. Bobrovsky, “BoT-SORT: Robust associations for multi-pedestrian tracking,” arXiv preprint arXiv:2206.14651, 2022.

Z. Liu, H. Mao, C.-Y. Wu, C. Feichtenhofer, T. Darrell and S. Xie, “A ConvNet for the 2020s,” 2022 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), New Orleans, LA, USA, pp. 11966-11976, 2022.

M. Tan and Q. V. Le, “EfficientNetV2: Smaller models and faster training,” in Proceedings of the 38th International Conference on Machine Learning, 2021.

G. Huang, Z. Liu, L. Van Der Maaten, and K. Q. Weinberger, “Densely Connected Convolutional Networks,” in 2017 IEEE Conference on Computer Vision and Pattern Recognition (CVPR), pp. 2261-2269, 2017.

I. Radosavovic, R. P. Kosaraju, R. Girshick, K. He and P. Dollár, “Designing Network Design Spaces,” 2020 IEEE/CVF Conference on Computer Vision and Pattern Recognition (CVPR), Seattle, WA, USA, pp. 10425-10433, 2020.

A. Howard et al., “Searching for MobileNetV3,” 2019 IEEE/CVF International Conference on Computer Vision (ICCV), Seoul, Korea (South), pp. 1314-1324, 2019.