Multi-Task Learning with Fusion: Framework for Handling Similar and Dissimilar Tasks

Abstract

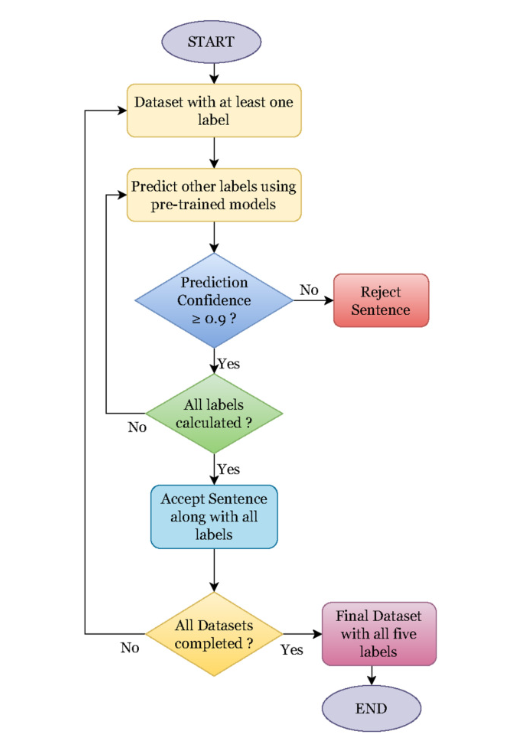

Multi-task learning (MTL), which has emerged as a powerful concept in machine learning and deep learning, trains a single shared model to handle multiple tasks simultaneously. The advantages of this approach motivate us to investigate tasks of similar genres (identification of sentiment, sarcasm, hate speech, offensive language, etc.) and dissimilar genres (identification of sentiment, claim, and language). This paper proposes two MTL frameworks based on Bidirectional LSTM (BiLSTM) to handle both similar and dissimilar tasks. The performance of these frameworks is evaluated and compared against standalone classifiers, demonstrating their effectiveness in improving classification accuracy. To train the proposed MTL frameworks, publicly available datasets for the different tasks were collected, and each sentence was annotated with labels for all tasks using publicly available pre-trained models. Along with a simple MTL framework, this paper presents an MTL framework with a fusion technique (MTL fusion) that combines what the task-specific layers have learned to make predictions. For the similar tasks, the proposed MTL fusion framework achieves F1 scores of 0.76, 0.92, 0.809, 0.798, and 0.89 for sentiment, sarcasm, irony, hate speech, and offensive language classification, respectively. For the dissimilar tasks, it achieves F1 scores of 0.59, 0.586, and 0.707 for claim, sentiment, and language identification, respectively. Our results also show that the MTL frameworks outperform their corresponding standalone classifiers on similar tasks, whereas the standalone classifiers outperform the MTL frameworks on dissimilar tasks.
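The idea of a shared BiLSTM with task-specific layers and a fusion step can be illustrated with a minimal PyTorch sketch. This is not the authors' implementation: the abstract does not specify the fusion mechanism, so concatenation of the task-specific layer outputs is an assumption here, as are the class name `MTLFusion`, the layer sizes, the vocabulary size, the treatment of every task as binary classification, and the unweighted sum of per-task losses.

```python
import torch
import torch.nn as nn


class MTLFusion(nn.Module):
    """Hypothetical sketch: shared BiLSTM, one layer per task, concatenation fusion."""

    def __init__(self, vocab_size, task_names, embed_dim=64, hidden_dim=32, task_dim=16):
        super().__init__()
        self.task_names = list(task_names)
        # Shared layers: every task reads the same embedding and BiLSTM encoder.
        self.embedding = nn.Embedding(vocab_size, embed_dim, padding_idx=0)
        self.encoder = nn.LSTM(embed_dim, hidden_dim, batch_first=True, bidirectional=True)
        # Task-specific layers: one dense layer per task on top of the shared encoding.
        self.task_layers = nn.ModuleDict(
            {name: nn.Linear(2 * hidden_dim, task_dim) for name in self.task_names}
        )
        # Fusion (assumed): each output head sees the concatenation of all task features.
        fused_dim = task_dim * len(self.task_names)
        self.heads = nn.ModuleDict(
            {name: nn.Linear(fused_dim, 1) for name in self.task_names}
        )

    def forward(self, token_ids):
        embedded = self.embedding(token_ids)           # (batch, seq_len, embed_dim)
        _, (h_n, _) = self.encoder(embedded)           # h_n: (2, batch, hidden_dim)
        # Final forward and backward hidden states form the sentence representation.
        sentence = torch.cat([h_n[0], h_n[1]], dim=1)  # (batch, 2 * hidden_dim)
        task_feats = [torch.relu(self.task_layers[n](sentence)) for n in self.task_names]
        fused = torch.cat(task_feats, dim=1)           # (batch, task_dim * n_tasks)
        # One logit per task; apply a sigmoid to obtain a probability.
        return {n: self.heads[n](fused).squeeze(1) for n in self.task_names}


# Toy usage with random token ids and random binary labels for every task.
tasks = ["sentiment", "sarcasm", "irony", "hate_speech", "offensive"]
model = MTLFusion(vocab_size=1000, task_names=tasks)
batch = torch.randint(1, 1000, (4, 12))  # 4 sentences, 12 token ids each
labels = {t: torch.randint(0, 2, (4,)).float() for t in tasks}

logits = model(batch)
loss_fn = nn.BCEWithLogitsLoss()
# Joint objective (assumed): unweighted sum of the per-task losses.
loss = sum(loss_fn(logits[t], labels[t]) for t in tasks)
loss.backward()
```

Because every sentence carries a label for every task, a single forward pass yields one loss term per task, and the shared encoder receives gradients from all of them. A simple MTL variant without fusion would feed each task-specific feature vector only to its own head instead of the concatenated vector.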

Article Details

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.
