A unified convolution neural network for dental caries classification

Abstract

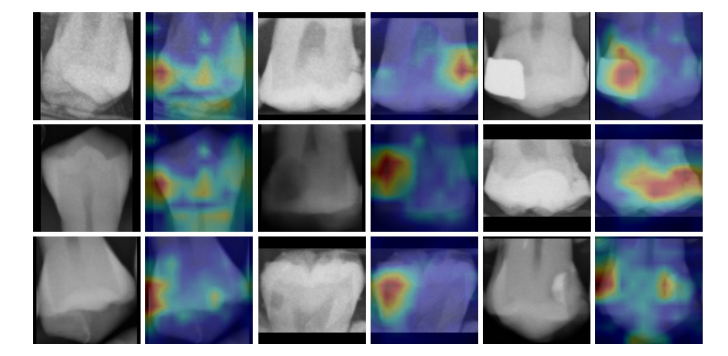

Dental caries is one of the most common chronic diseases of the oral cavity. Early detection of initial dental caries is needed for treatment. However, the initial carious lesion, also known as enamel caries, is difficult to diagnose because the lesion is tiny and easily missed through human perception error. In this paper, we propose a unified convolutional neural network that aims to improve dentists' diagnostic and treatment performance by classifying caries from bitewing radiographs. We adapt the AlexNet and ResNet models to classify the dental caries dataset. The modified ResNet achieves binary-classification accuracies of 86.67%, 87.78% and 82.78% on teeth with all conditions, teeth without dental restorations, and only teeth with dental restorations, respectively. For multilevel classification, our model achieves a 5-class average accuracy of 80%. Notably, our adapted ResNet-18 performs well on enamel caries and secondary caries, with accuracies of 86.67% and 77.78%, respectively. In contrast, our ResNet-50 and ResNet-101 perform poorly on enamel and secondary caries but well on sound teeth, dentin caries and teeth with restorations, with accuracies of 90%, 78.89% and 88.89%, respectively. These accuracies suggest that our model could support dentists in improving diagnostic performance.
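
The abstract reports per-class accuracies and a 5-class average but does not say how they were computed. As a minimal sketch, assume that per-class accuracy is the fraction of images of a given true class that were classified correctly, and that the 5-class average is the unweighted mean of those values. The class names below are taken from the categories named in the abstract; the confusion matrix, the function names and the counts are hypothetical and serve only to illustrate the arithmetic. They are not results from the paper.

```python
from typing import List

# Class labels as named in the abstract; the ordering is an assumption.
CLASSES = [
    "sound teeth",
    "enamel caries",
    "dentin caries",
    "secondary caries",
    "teeth with restoration",
]


def per_class_accuracy(confusion: List[List[int]]) -> List[float]:
    """Return, for each true class, the fraction of its images predicted correctly.

    confusion[i][j] is the number of images of true class i predicted as class j.
    """
    accuracies = []
    for i, row in enumerate(confusion):
        total = sum(row)
        # Guard against an empty class to avoid division by zero.
        accuracies.append(row[i] / total if total else 0.0)
    return accuracies


def macro_average(values: List[float]) -> float:
    """Unweighted mean over classes."""
    return sum(values) / len(values)


# Hypothetical counts (10 images per class), NOT data from the paper.
confusion = [
    [9, 1, 0, 0, 0],
    [1, 8, 1, 0, 0],
    [0, 1, 8, 1, 0],
    [0, 0, 1, 7, 2],
    [0, 0, 0, 2, 8],
]

acc = per_class_accuracy(confusion)
avg = macro_average(acc)

for name, value in zip(CLASSES, acc):
    print(f"{name}: {value:.2%}")
print(f"5-class average: {avg:.2%}")
```

With equal numbers of images per class, as in this toy matrix, the unweighted mean coincides with overall accuracy; with imbalanced classes the two can differ, so it matters which one a reported "average accuracy" refers to.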

Article Details

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.
