A Comparative Study of Rice Variety Classification based on Deep Learning and Hand-crafted Features

Abstract

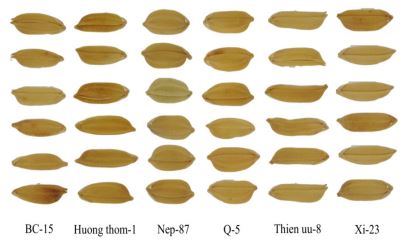

Rice is vital to people all around the world, and efficient classification of rice seed varieties is one of the most essential tasks in quality inspection. Currently, technicians perform this task by experience, comparing the colour, shape and texture of rice seeds. We therefore seek an appropriate process for building an automated rice recognition system. In this paper, several hand-crafted descriptors and Convolutional Neural Network (CNN) methods are evaluated and compared. Experiments are conducted on the VNRICE dataset, where the highest accuracy, 99.04%, is obtained with the DenseNet121 architecture.
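To make the notion of a hand-crafted descriptor concrete, the sketch below computes a basic Local Binary Pattern (LBP) histogram from a grayscale image. LBP is one widely used texture descriptor; the 3x3 neighbourhood, the 256-bin histogram and the function name `lbp_histogram` are illustrative assumptions and are not taken from the paper's experimental setup.

```python
import numpy as np


def lbp_histogram(gray):
    """Return a normalised 256-bin histogram of basic 3x3 LBP codes.

    Illustrative only: the neighbourhood size and bin count are assumptions.
    """
    gray = np.asarray(gray, dtype=np.float64)
    h, w = gray.shape
    center = gray[1:-1, 1:-1]
    # Eight neighbours, clockwise starting from the top-left pixel.
    offsets = [(-1, -1), (-1, 0), (-1, 1), (0, 1),
               (1, 1), (1, 0), (1, -1), (0, -1)]
    codes = np.zeros(center.shape, dtype=np.int64)
    for bit, (dy, dx) in enumerate(offsets):
        neighbour = gray[1 + dy:h - 1 + dy, 1 + dx:w - 1 + dx]
        # Set this bit where the neighbour is at least as bright as the centre.
        codes |= (neighbour >= center).astype(np.int64) << bit
    hist = np.bincount(codes.ravel(), minlength=256).astype(np.float64)
    return hist / hist.sum()


# Hypothetical usage on a random 64x64 "image".
rng = np.random.default_rng(0)
feature = lbp_histogram(rng.integers(0, 256, size=(64, 64)))
print(feature.shape)
```

The resulting fixed-length vector could be passed to any conventional classifier, whereas a CNN learns its features directly from the pixels.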

Article Details

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.
